Most people don't think twice about zero. It sits quietly on keyboards, appears in dates, and holds our place when we're counting. It's the nothing between something and something else. Unremarkable. Obvious.

But zero is neither unremarkable nor obvious. In fact, for most of human history, zero didn't exist at all.

This is the story of how a number that represents nothing became one of the most revolutionary ideas in mathematics, philosophy, and commerce—and why its invention was far stranger, and far more contested, than you might imagine.

The problem with nothing

Early number systems—Egyptian, Greek, Roman—had no zero. They had symbols for one, ten, a hundred, but they had no symbol for the absence of quantity. This wasn't an oversight. It was philosophical.

To the Greeks, numbers represented things. How could you have a number for nothing? Aristotle argued that the void—the concept of nothingness—was logically impossible. A number for nothing would be a contradiction.

The Romans agreed. Their numerals (I, V, X, L, C, D, M) worked perfectly well for counting soldiers, recording debts, and marking the passage of time. If you had nothing, you simply didn't write anything. The absence of a symbol was the symbol.

This worked fine—until mathematics became more complex.

Try doing long division with Roman numerals. Try calculating compound interest. Try keeping track of astronomical observations across centuries. Without a placeholder to show where a column was empty, these systems buckled under their own limitations.

Enter: India.

The invention of nothing

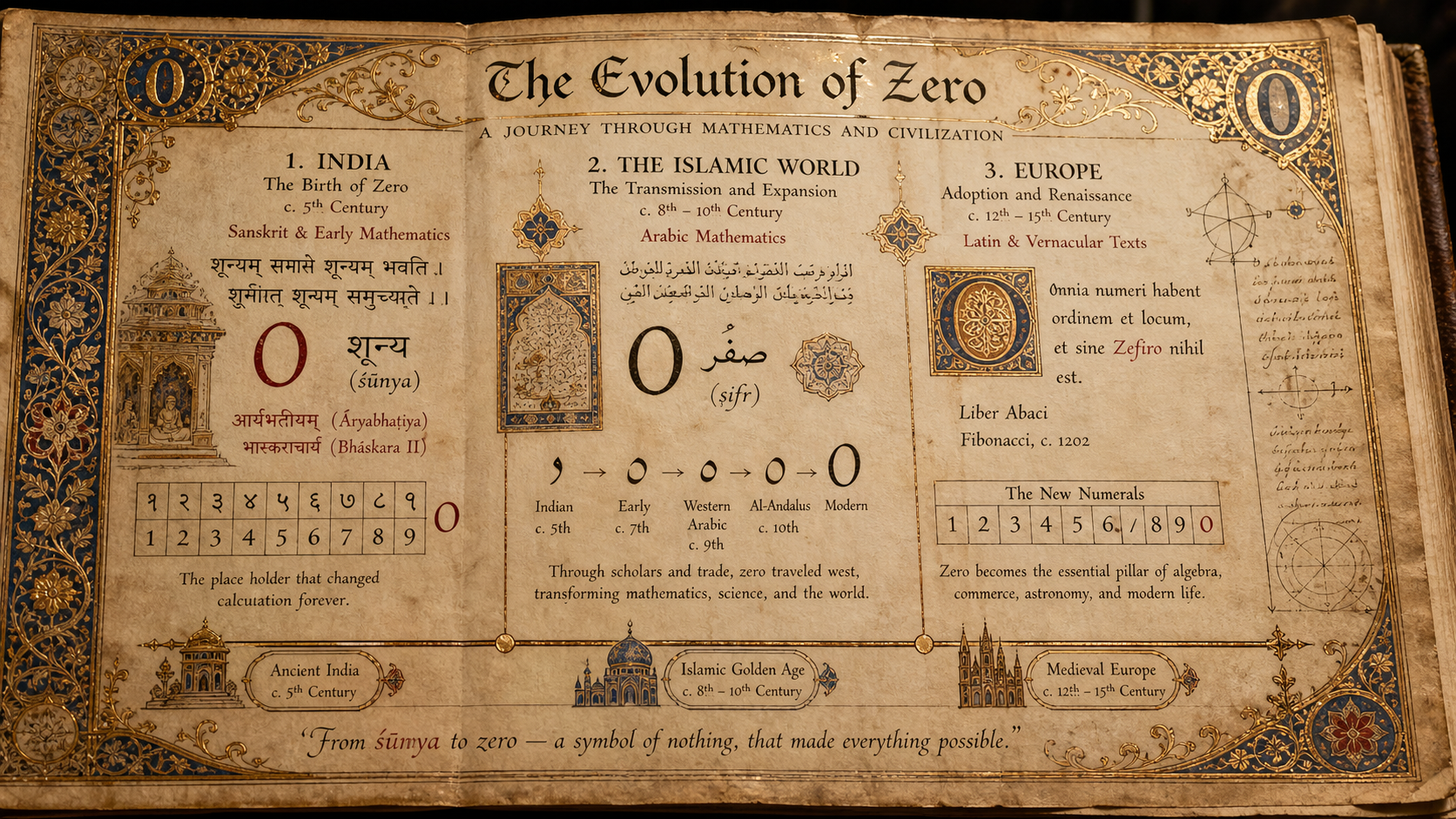

Sometime around the 5th century CE, Indian mathematicians developed a symbol for zero. They called it sunya, meaning "empty" or "void." It wasn't just a placeholder: by the 7th century, the mathematician Brahmagupta had set out rules for adding, subtracting, and multiplying with it, treating it as a number like any other.

This was revolutionary.

With zero, Indian mathematicians unlocked positional notation: the idea that a digit's value depends on its position in a number. In the number 305, the zero tells you there are no tens. Without it, you'd have no way to distinguish 35 from 305 from 3005.
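To make the 35 / 305 / 3005 point concrete, here is a minimal Python sketch of positional notation. The function names (`expand`, `value`, `drop_zeros`) are hypothetical, chosen only for this illustration, and the sketch assumes ordinary base-10 numerals written as strings.

```python
def expand(numeral: str) -> list[tuple[int, int]]:
    """Pair each digit with the place value its position gives it (base 10)."""
    n = len(numeral)
    return [(int(d), 10 ** (n - 1 - i)) for i, d in enumerate(numeral)]


def value(numeral: str) -> int:
    """Sum digit * place value to recover the number."""
    return sum(digit * place for digit, place in expand(numeral))


def drop_zeros(numeral: str) -> str:
    """Simulate a notation with no placeholder: empty columns just vanish."""
    return numeral.replace("0", "")


for numeral in ("35", "305", "3005"):
    # With zero, each numeral keeps its own value; without it, all collapse.
    print(numeral, expand(numeral), value(numeral), drop_zeros(numeral))
```

In "305" the digits pair up as 3 × 100, 0 × 10, and 5 × 1. Strip the zeros and all three numerals are written the same way, which is exactly the ambiguity a placeholder removes.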

Zero also gave negative numbers a natural anchor, sitting between positive and negative on the number line. And it opened the door to algebra, where equations could balance around nothing.

But India didn't broadcast this discovery to the world. For centuries, zero remained an uncommon piece of knowledge, used by astronomers and mathematicians but largely unknown outside the subcontinent.

The long journey west

Zero's journey to Europe was slow and contested.

It traveled east to China, where it was adopted into Chinese mathematics, and west along trade routes into the Islamic world, where Arab and Persian scholars recognized its power. The Persian mathematician al-Khwarizmi wrote about zero and the decimal system in the 9th century, and his work eventually reached Europe through Latin translation.

But Europe resisted.

Medieval European scholars, steeped in Greek philosophy and Roman tradition, were deeply suspicious of zero. How could nothing be something? The Church was particularly wary: zero felt dangerously close to the void, to chaos, to the absence of God.

Italian merchants were the first to embrace it, not for philosophical reasons but for practical ones. The abacus and Roman numerals were clumsy tools for tracking the complex finances of late medieval trade. The Hindu-Arabic numeral system, with its magical zero, made calculations faster, clearer, and less error-prone.

In 1202, Leonardo of Pisa, better known as Fibonacci (yes, that Fibonacci), championed the new system in his book Liber Abaci. Slowly, reluctantly, zero began to spread.

Even then, it took centuries for zero to be fully accepted. Some cities banned its use in official documents, fearing fraud. Others clung to Roman numerals out of tradition or pride.

It wasn't until the 16th and 17th centuries—with the rise of modern science, calculus, and precise astronomical measurement—that zero became indispensable.

Why zero still matters

Today, zero is everywhere. It's the foundation of binary code (0 and 1), the backbone of computer science, and the starting point of the Cartesian coordinate system. Without zero, there would be no calculus, no negative numbers, no algebra as we know it.

But zero is more than a mathematical tool. It's a philosophical statement.

To accept zero is to accept that nothing can be something. That absence has presence. That the space between things is as important as the things themselves.

In that sense, zero is deeply uncommon. It defies the logic of the physical world—you can't hold zero apples or point to zero trees. Yet it's essential to how we describe, measure, and understand that world.

And perhaps that's the most uncommon thing about it: zero is the most useful number that doesn't exist.

Want to go deeper?

The story of zero touches on mathematics, philosophy, religion, commerce, and cultural exchange. It's the kind of topic that refuses to stay inside a single subject.

If you're curious about how ideas like this—small, strange, and world-changing—are woven through history, you might enjoy Uncommonology. We teach the knowledge that falls through the gaps.

Start with the Free Certificate: https://uncommonology.co.uk/certificate/

Begin with Nothing

Zero began as an absence, then quietly changed the world.

Uncommonology® begins in much the same spirit: with a small act of curiosity. The Free Certificate is your first step into the study of uncommon knowledge — a short, playful introduction to strange ideas, hidden histories, and the art of noticing what ordinary education tends to leave behind.

Start with the Free Certificate and see where the uncommon leads.

Start the FREE Certificate → https://uncommonology.co.uk/certificate/